Proof of Quality: Who Verified the Data That Trained Your Model?

Since the start of the 2025 school year, Austin school officials have recorded 19 instances of autonomous vehicles blowing past school bus stop signs while children were boarding or exiting.

One incident occurred moments after a student crossed in front of the vehicle, while the child was still in the road. Red lights flashing. Stop sign arm extended. The car kept moving.

One operator issued a software recall covering 3,067 vehicles. Two days after the fix was deployed, another violation was recorded.

This wasn't a sensor failure. The cars could see the buses. The AI simply wasn't trained to respond correctly. Somewhere in the pipeline that created these systems, in the millions of labeled images and thousands of evaluations that shaped their behaviour, something was missed. And here's the part that should concern everyone building AI: there's no way to trace back to what went wrong.

No auditable record of who made the critical judgments. No proof of what quality standards were applied. No way to verify that the contributors and validators were qualified, attentive, or unbiased.

Just hope that they got it right.

This Isn't an Isolated Problem

In October 2023, a robotaxi in San Francisco failed to recognise a pedestrian underneath it after a collision. Following its programming, the vehicle attempted to pull over, dragging the woman 20 feet. Investigators found that the system had "lost tracking of and then 'forgot' the pedestrian."

Georgia Tech researchers found that pedestrian detection models are roughly 5% less accurate at identifying people with darker skin tones. All 24 top performing detection methods on standard benchmarks have higher miss rates on children. These aren't bugs. They're the predictable result of training data that wasn't representative and evaluations that didn't catch the gaps.

NewsGuard reports that hallucination rates in major AI chatbots nearly doubled in one year, from 18% to 35% for news related queries. Legal researchers have documented over 800 instances of AI models inventing non-existent court cases, complete with fabricated legal reasoning. One lawyer submitted six fake precedents from ChatGPT in a federal court brief.

Air Canada was ordered to pay damages after its chatbot confidently cited a bereavement fare policy that didn't exist.

In each case, the failure traces back to the same root: unverifiable quality signals. The systems were shaped by evaluations, preference rankings, safety ratings, and quality assessments that left no auditable trail. You can see the result (a car that ignores a stop sign, a model that hallucinates, a chatbot that invents policy) but you can't prove the process. No independent way to know who made the judgment, how it was made, or whether it should have been trusted.

The Hidden Layer

In May 2024, 97 data labellers in Nairobi wrote an open letter to President Biden describing their work: watching "murder and beheadings, child abuse and rape, pornography and bestiality, often for more than 8 hours a day" for less than $2 an hour. These are some of the people making the judgments that shape how AI models behave.

This isn't meant as criticism of these workers. They're doing difficult, necessary work under difficult conditions. The point is that you have no idea who is making the judgments that train your models. Were they qualified for the domain? Were they rushing to meet quotas? Were they gaming the system for payment? Were they just agreeing with whatever the model said to finish faster? Were they bringing cultural assumptions that don't match your use case?

Research shows labellers exhibit anchoring bias, confirmation bias, and information bias. Information bias is particularly insidious: labellers consistently rate verbose responses as "better" even when shorter answers are more accurate. Why? More words pattern match to effort, thoroughness, expertise. We're wired to associate quantity with quality. AI models learn this bias too, which is one reason they tend toward verbose, hedging responses rather than direct ones. These biases compound. They get baked into models. And when something goes wrong downstream, there's no way to trace it back to the specific judgments that caused it.

Every AI company faces this. Every model trained with human feedback inherits this problem. The quality layer that determines whether your AI is safe, accurate, and reliable is fragmented, inconsistent, and fundamentally unverifiable.

We've Lived This

Sapien has spent >2 years and 195 million tasks in the AI data quality trenches. Labelling, evaluation, RLHF, preference data. We've worked with enterprise clients building autonomous vehicles, generative AI, and robotics systems. We've seen what happens inside the black box of quality assurance.

We tried reputation systems. They're free to build and cheap to burn. A bad actor can grind up a high score over weeks, then cash out on one high-value malicious task and disappear before anyone notices.

We tried internal QA. You end up sampling from a process you can't see, layering reviewers on reviewers. Subjective calls get escalated. Disputes pile up. The process gets slower and more expensive, and at the end you still can't prove any of it was right. Just internal agreement and hope.

None of it solved the core problem. The quality signal you need? It's locked in a vendor's system, a labelling company's dashboard, somewhere you can't reach. You can see what your AI does. You can't prove why it does it. And no one who made the judgments that shaped it had anything real on the line.

Proof of Quality fixes this.

What Proof of Quality Actually Is

PoQ is an open protocol for verifying that something is true, correct, acceptable, or trustworthy. It creates an auditable trail of why a specific piece of data, output, or action was deemed quality, providing a quantifiable signal for both subjective and definitive judgments.

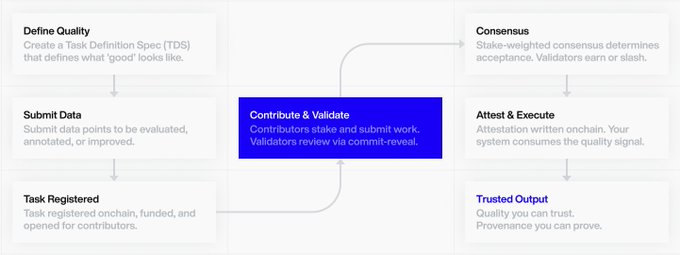

The core loop is simple:

Contributors stake capital and do work: evaluate outputs, verify claims, provide chain-of-thought reasoning, annotate data.

Validators stake and review that work independently. They submit their assessment as a cryptographic hash before seeing anyone else's answer. No copying, no herding, no "I'll just agree with the majority."

When validators reveal their assessments and reach consensus, that agreement is the quality signal. Not a dashboard metric. Not an internal QA score. Genuine independent agreement from participants with skin in the game.

Get it right, earn. Get it wrong, get slashed.

The result gets recorded onchain. Not the data itself (your data never leaves your systems), just the attestation: who created it, who validated it, what consensus was reached, and why.

Human or AI: PoQ Doesn't Care

Contributors and validators can be human, AI agents, or a mix of both. PoQ is agnostic. The protocol cares about one thing: determining what's true or false, right or wrong, fact or fiction.

If your use case requires human judgment specifically (safety evaluations, subjective preferences, domain expertise), you specify that in your task definition. If you're validating agent outputs or running automated QA, AI participants work just as well. The consensus mechanism and economic incentives function identically either way.

What matters is the verifiable trail: who or what made the judgment, how consensus was reached, and whether the output can be trusted.

You don't have to trust anyone. The economics do the work.

Why This Works: The Game Theory

Traditional quality systems fail because the incentives are wrong.

Reputation is cheap. It costs nothing to build and nothing to burn. You can accumulate a high score over time, then exploit it for one high-value malicious action with no lasting consequence.

Payment-per-task creates the wrong optimisation. When workers are paid to complete tasks quickly, they optimise for speed over accuracy. Quality becomes someone else's problem.

Internal QA is expensive and circular. You're sampling from a process you can't fully observe, using reviewers whose own judgments are unverified.

PoQ changes the game by making every judgment carry real cost and real upside.

Stake Creates Accountability

When contributors and validators stake capital, gaming the system means losing money, not just losing reputation points. The higher your confidence in a judgment, the more you can stake. The more you stake, the more you stand to earn if you're right, and the more you lose if you're wrong.

This creates a natural sorting mechanism. Participants who are genuinely good at evaluation stake more and earn more. Those who are guessing or gaming stake less and get filtered out over time.

This creates a self-cleaning system. Bad data doesn't just get flagged, it gets economically penalised. Contributors who submit low-quality work get slashed. Validators who approve bad work get slashed. The incentives actively push bad data out while surfacing a verifiable quality signal for what remains.

Commit-Reveal Prevents Collusion

Validators submit a cryptographic hash of their assessment before anyone else's answer is visible. Only after all commits are in do validators reveal their actual judgments. The protocol verifies each reveal matches its commit.

This means validators can't copy each other. They can't wait to see which way consensus is trending and pile on. When agreement emerges, it's genuine independent judgment, which is the whole point of having multiple reviewers in the first place.

This prevents copying, but not scale attacks. What stops someone from spinning up a thousand validators? Economics and earned skills. We address those below.

Consensus Replaces Authority

Traditional QA relies on designated experts whose judgments are treated as ground truth. But expertise is hard to verify, and experts can be wrong, biased, or compromised.

PoQ treats consensus among staked validators as the quality signal. If multiple qualified participants with skin in the game independently agree, that agreement carries more weight than any single authority. Disagreement gets surfaced rather than hidden, which is often where the most valuable signal lies.

Finding the Right Balance: Consensus Models

There's real tension in designing these mechanisms, and getting the balance right matters.

One challenge is preventing whales from dominating consensus. If someone can stake enough to overwhelm other validators, the system becomes pay-to-win rather than merit-based. But if you cap how much influence any single validator can have, you also cap their upside, which weakens the incentive to participate with conviction.

We're actively modelling several approaches:

Linear caps limit each validator to a percentage of the total stake in any quorum. Simple, secure, but potentially blunts the game theory.

Quadratic weighting diminishes the marginal influence of additional stake. More elegant, but harder to model and potentially opens new attack vectors.

Dynamic lottery selection accepts more validators than needed, then randomly selects from the pool weighted by reputation. Adds unpredictability that makes gaming harder.

Top-by-stake selection takes the validators who staked the most as the consensus committee, reasoning that highest stake equals highest confidence equals most reliable signal.

We need real-world usage to know which model works best for which use cases. A simple hash verification might need fast, lightweight consensus. A safety-critical evaluation might need deeper redundancy with stronger guarantees. This is exactly the kind of tuning we're doing with design partners right now.

If this kind of mechanism design interests you, we'd love to build with you.

Skills: Verified Expertise

Not everyone is qualified to validate every type of work. Medical data requires medical knowledge. Legal evaluations require legal expertise. Code review requires programming ability.

PoQ uses a skills system that gates access to specific task types. Add skills to your validators to unlock specific areas of expertise. Build your own skills to gain a real edge.

Validators earn skills by first being contributors, doing the work themselves and having their contributions validated by others. This creates a natural progression: prove you can do the work before you're trusted to evaluate others' work.

Task originators can specify skill requirements when they create tasks. If you're running safety evaluations for a medical AI, you can require that validators have demonstrated medical domain expertise. If you're validating code, you can require programming skills.

This also functions as Sybil resistance. Creating fake accounts is easy; earning real skills through verified work is hard. Combined with staking requirements, the barrier to gaming the system becomes substantial.

The Technical Architecture

PoQ is designed to plug into existing workflows, not replace them. The architecture has four layers:

TDS (Task Definition Standard)

TDS is an open schema for describing any task that requires quality verification. It defines what's being evaluated, what "good" looks like, how many validators are needed, what skills are required, and what agreement means.

When you create a task, the TDS is your chance to stipulate your quality requirements. Want human-only validation? Specify it. Need domain expertise? Require specific skills. The schema is simple JSON that most teams can map to from existing formats.

PoQ Engine

The engine is the heart of the protocol. It handles:

- Redundancy: collecting multiple independent assessments per task

- Consensus: determining agreement and flagging disagreement

- Scoring: producing a quality score weighted by stake and reputation

- Staking/Slashing: managing the economic incentive layer

- Attestations: recording provenance onchain without exposing your data

Your raw data stays in your systems: S3, GCS, wherever you keep it. Only the cryptographic attestation goes onchain. Proof of who created the data, who validated it, and what consensus was reached.

Task API + SDK

A simple API for submitting tasks, retrieving results, and fetching attestations. JavaScript and Python SDKs for easy integration. Any client can interact with PoQ. Our own interface is just one option.

Adapters

The distribution layer. Adapters map existing tools into PoQ so you don't have to change your workflow.

Your tool emits a task. The adapter maps it to TDS format. PoQ processes it. An attested result comes back. No migration required. You keep using the tools you already use.

Early adapter targets include agent frameworks, model evaluation suites, and RLHF pipelines: anywhere quality judgments shape AI behaviour.

What This Enables

For safety-critical applications

Robotics, autonomous vehicles, medical AI: anywhere the stakes are physical, not just digital. PoQ provides the audit trail that regulators will increasingly demand. Proof of the quality process, not just the quality claim.

For teams building agents

When your agent needs a quality check, a safety gate, or a decision point, you get verifiable proof that the check actually happened and who or what made it. The difference between "our internal process approved this" and "here's the cryptographic proof of independent consensus."

For teams doing RLHF and preference data

Every preference judgment becomes traceable. When your model behaves unexpectedly, you can audit back to the evaluations that shaped that behavior. Not just "the training data said X" but "these specific validators, with this stake, reached this consensus."

For annotation and evaluation teams

Visual annotation, bounding boxes, COCO-format outputs: the foundational work that AI systems depend on. PoQ adds verifiable quality to the outputs you're already producing.

Where We Are

Alpha testnet is live. Smart contracts are deployed. Design partners are helping us figure out what works.

We're running PoQ inside real pipelines, validating model responses, improving reinforcement loops, building the feedback systems that will help us understand what works and what doesn't.

We haven't figured all of this out yet: consensus mechanism tuning, the right balance between security and incentive strength, which integration surfaces give the fastest learning loop. These are questions that get answered in production, not in theory. If you have thoughts on any of these, we'd love to hear them.

What's Coming

Now: Alpha testnet, smart contracts deployed, design partners stress-testing our assumptions. First integration focused on visual annotation and bounding box validation.

Next: Testnet access expanding beyond initial partners.

Q2: V1 mainnet. First public integrations. JavaScript and Python SDKs.

Future: LangChain/LangSmith adapters for native agentic integration. Multi-modal data validation beyond visual annotation. ZK proof-of-identity for qualification gating. Multichain support. And eventually, fully open source servers, oracles, and frontends.

An Invitation

We're building this in public because we think the problem is too important to solve in isolation. The people who understand data quality problems most deeply are the ones living with them every day: building AI systems, running annotation pipelines, trying to figure out why their models behave unexpectedly.

We're actively onboarding design partners. Not customers. Partners. People who can tell us what "good" looks like for their specific use case. What does that mean? It means helping us understand the problems you're facing. Ultimately, helping us build something genuinely useful rather than theoretically elegant. The more perspectives we have steering decision making, the more likely we are to build something that actually works.

If you're working on AI pipelines where "trust me, bro" isn't good enough, where you need to prove your data quality, not just hope, we want to build with you.

build@sapien.io

Don't trust. Verify.

Sources

Autonomous vehicle school bus incidents: NPR, Car and Driver

Robotaxi pedestrian incident: Road and Track

Pedestrian detection bias: Georgia Tech, arXiv

Hallucination rates: NewsGuard via VKTR

Hallucinated legal cases: Charlotin Database

Air Canada chatbot: MIT Sloan

Nairobi data labelers: Privacy International

Labeler cognitive bias: iMerit, MIT Press